Daily log archive for Feb 2026. Go to the current daily log, or browse the archive index.

2026-02-24

Nobody's ever ready

The Imperfectionist: Nobody's ever ready #anxiety #ai

Nothing better than a fresh newsletter edition from Oliver Burkeman to restart logging after a few weeks of intermittent entries, thanks to work travel and binge-watching movies at the Berlinale.

And even he has decided to address AI in his latest.

I’m not going to link to any of these contagious anxiety-spreading pieces, for the same reason I don’t go around actively sneezing in people’s faces when I catch a cold. But it’s fair to say I find the topic a little triggering. Because this basic stance toward life – the anxious attempt to scramble to a place of psychological safety, to avoid being condemned to disaster and cast into the void – goes back a long way with me. So it all feels rather personal, and important for me to say that you don’t, actually, have to live like this. It won’t make you happier. It probably won’t even aid your career. You have the option of living with vastly more creativity and calm than the anxiety-merchants would have you believe – provided you can summon the strength of mind to screen them out.

The stomach-clench of anxiety isn’t anything like that. Rather, it emerges from the feeling that reality poses a fundamental threat to your security, so that hypervigilance and constant effort will be required to forestall annihilation. It implies that it’ll be very difficult indeed to make it to safety (with the corollary that if you fail, it’ll be because you didn’t try hard enough).

The reason “you’re not ready for what’s coming next”, in other words, is that we’re never ready for what’s coming next. To quote the splendid title of a book on Jewish spirituality by Alan Lew, This is Real and You Are Completely Unprepared. “This” being, of course, the human condition – not the latest subscription product with which OpenAI or Anthropic hope to justify their wild valuations.

Deming vs Drucker

Objectives and constraints – Surfing Complexity #management #organizations

I am also wholeheartedly in the Deming camp.

Deming was vehemently opposed to management by objective. Rather, he saw an organization as a system. If you wanted to improve the output of a system, you had to study it to figure out what the limiting factor was. Only once you understood the constraints that limited your system, could you address them by changing the system.

…

I’m in Deming’s camp, but I can understand why Drucker won. Drucker’s approach is much easier to put into practice than Deming’s. Specifically, Drucker gave managers an explicit process they could follow. On the other hand, Deming…, well, here’s a quote from Deming’s book Out of the Crisis:

Eliminate management by objective. Eliminate management by numbers, numerical goals. Substitute leadership.

I can see why a manager reading this might be frustrated with his exhortation to replace a specific process with “leadership”. But understanding a complex system is hard work, and there’s no process that can substitute for that. If you don’t understand the constraints that limit your system, how will you ever address them?

Ironically, I found this via a follow-up blog entry by the same author where he admits defeat 🙃: Poor Deming never stood a chance – Surfing Complexity

The Film Students Who Can No Longer Sit Through Films

The Film Students Who Can No Longer Sit Through Films - The Atlantic #attention #crisis

This article struck me especially because I am just coming off a ~25-movie binge at Berlinale. It helped that I was watching them in a movie theater, but I am still glad I retain the attention span that got me through most movies. If anything, the reason I found it hard and occasionally dozed off in a movie was fatigue rather than inattention.

Everyone knows it’s hard to get college students to do the reading—remember books? But the attention-span crisis is not limited to the written word. Professors are now finding that they can’t even get film students—film students—to sit through movies. “I used to think, If homework is watching a movie, that is the best homework ever,” Craig Erpelding, a film professor at the University of Wisconsin at Madison, told me. “But students will not do it.”

I heard similar observations from 20 film-studies professors around the country. They told me that over the past decade, and particularly since the pandemic, students have struggled to pay attention to feature-length films. Malcolm Turvey, the founding director of Tufts University’s Film and Media Studies Program, officially bans electronics during film screenings. Enforcing the ban is another matter: About half the class ends up looking furtively at their phones.

The professors I spoke with didn’t blame students for their shortcomings; they focused instead on how media diets have changed. From 1997 to 2014, screen time for children under age 2 doubled. And the screen in question, once a television, is now more likely to be a tablet or a smartphone. Students arriving in college today have no memory of a world before the infinite scroll. As teenagers, they spent nearly five hours a day on social media, with much of that time used for flicking from one short-form video to the next. An analysis of people’s attention while working on a computer found that they now switch between tabs or apps every 47 seconds, down from once every two and a half minutes in 2004. “I can imagine that if your body and your psychology are not trained for the duration of a feature-length film, it will just feel excruciatingly long,” USC’s Lippit said. (He also hypothesized that, because every movie is available on demand, students feel that they can always rewatch should they miss something—even if they rarely take advantage of that option.)

2026-02-15

Boy Kibble

Move Over, Girl Dinner. Boy Kibble Has Arrived. - The New York Times #food

Boy kibble — also known as “human kibble” since women eat it, too — is a ruthlessly efficient, male-coded rejoinder to the extemporaneous charms of “girl dinner.” The latter is a TikTok term for the assemblage of light bites that women sometimes cobble together and eat as a meal, with little care for gastronomic coherence. Boy kibble, in contrast, focuses on some nutritional ideal — here a mix of carbs, protein and fiber — that helps one achieve a specific body type or fitness goal. Pleasure-seeking details like flavor and aesthetics are tossed to the side.

Most nights, my dinner is a form of boy kibble, except I don't make it myself. It's a Hot and Savoury meal packet from Huel. So I guess I can relate a bit.

“In contrast to girl dinner, which is fun, whimsical and creative,” said Ms. Bitar, boy kibble is not “focused on flavor, it’s not focused on joy. It’s focused on efficiency and results.”

2026-02-13

How Courtship Transformed Masculinity

How Courtship Transformed Masculinity - by Alice Evans #romance #relationships #culture #anthropology

Ask an economist what drives progress towards gender equality, they’ll probably emphasise sustained economic growth, contraceptives, and female employment. Talk to a political scientist, hear that it’s all about feminist activism. All valid, but I want to add a culture of female choice, male competition, and marital companionship.

While romantic love is experienced worldwide, there is enormous variation in the extent to which it is celebrated or suppressed. In regions where marriages are arranged by kin or coerced through brutal violence, her wants and welfare count for little. If divorce is stigmatised, she cannot credibly threaten exit and must then endure any abuse.

…

What follows is a speech I performed at my German friends’ wedding: two thousand years of history through the lens of marriage, starting with Ancient Rome to the Reformation, Wars of Religion and subsequent Romanticism, all the way to 1970s counter-cultural liberalisation.

Situationism - what people do is more often a function of their circumstances

Getting the Crab to the Beach - by Josh Zlatkus #evo-psych #evolution #psychology

A few months ago, I wrapped up a long series on human behavior arguing for situationism—the idea that what people do is more often a function of their circumstances than their character. I was pleased to discover afterwards that Angela Duckworth agrees.

In my musings on mental health, I frequently return to images of animals radically out of place: crabs in the canopy, otters in the desert, armadillos in the arctic. What would we learn by studying such animals? One obvious lesson is that if we wanted to help them, we should send them home. It would be a waste of time—or worse—to focus on what they were thinking, feeling, or doing, since these would be the downstream outputs of an animal in the wrong environment.

For example, we could draw all sorts of conclusions by watching a crab scrabble at the smooth surface of a tree branch. Perhaps there is food just under the bark. Maybe it’s a mating ritual or territorial instinct. The correct explanation, of course, would be that the crab is trying, unsuccessfully, to burrow in the sand. Yet we’d have a hell of a time figuring this out if we didn’t already know that crabs belong on the beach.

…

Humans, of course, are crabs in the canopy. We are vacuum cleaners on the roof. This modern world we have built, in the blink of an evolutionary eye, is not our ancestral home. So we live in many ways for which we were not designed—for example, in the constant presence of so many strangers. Yet few people carry this perspective with them, even into fields like therapy, where you might expect it to be foundational. The result is that when distress appears in its various confusing forms, therapists and laypeople rarely treat it as situational pathology—as the noxious byproduct of a poor fit between person and environment.

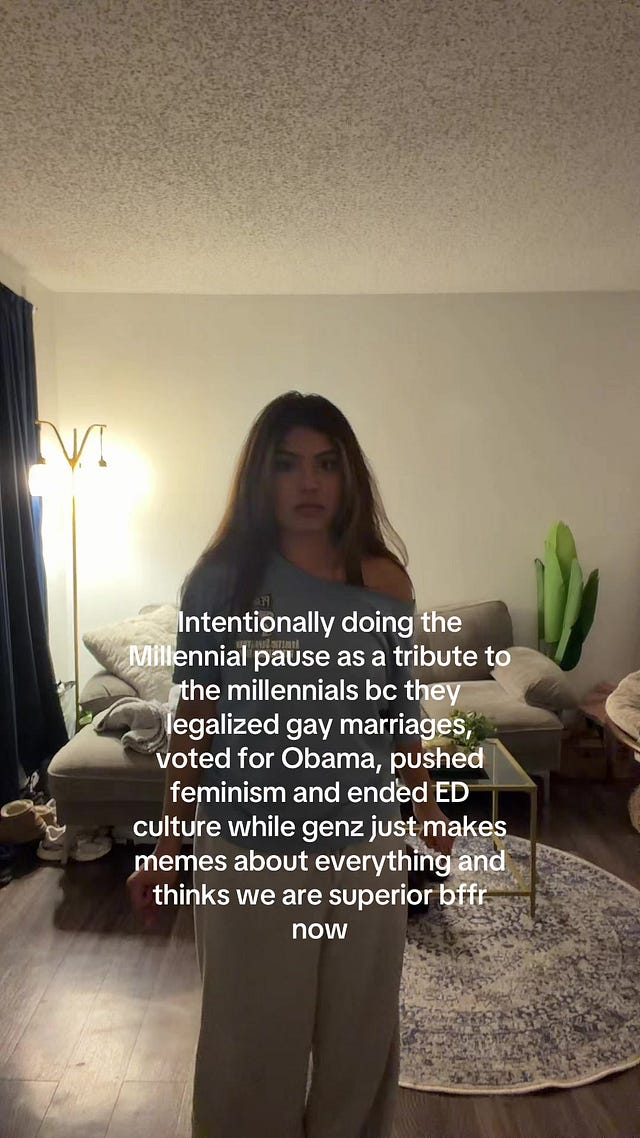

Millennials vs Gen Z

Enjoyed this little dig at Gen Z culture (as an elder millennial).

Won't Fix Self Help

"Won't Fix" self help #self-help #self-improvement

I can't read this article (members only!), but I love and wholeheartedly endorse the concept.

I'd like to propose a third option: the reasonable // rational recognition that most of your personal flaws are "Won't Fix" bugs, and the single most productive thing you can do about them is stop trying to patch them.

The self-help industry's entire business model depends on convincing you that every single bug in your system is fixable, that with the right framework, the right habits, the right coach, you'll finally refactor yourself into a clean, well-architected human being. But how many of your core personality traits have actually changed in the last decade?

The honest answer, for most people, is...very fucking few.

I also like the coding analogies used in the post 😊.

Self-improvement culture is a perpetual second-system rewrite of the self. You're constantly trying to architect Human 2.0, the version of you that's disciplined and calm and focused and doesn't check their phone 96 times a day (which is, by the way, the actual average for American adults, according to Asurion's widely cited research). But Human 2.0 never ships. You keep accumulating half-finished refactors and abandoned meditation streaks alongside a growing sense that something is fundamentally wrong with your willpower.

The alternative is the wrapper pattern. When you have a piece of legacy code that works but has an ugly interface, you don't rewrite it. You write a thin layer around it, a wrapper, that presents a clean interface to the rest of the system while leaving the messy internals untouched. The legacy code keeps doing what it always did, and the wrapper translates between the old system and the new requirements.
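For anyone who hasn't met the wrapper pattern in code, here is a minimal Python sketch of the idea described above. The names `legacy_parse_date` and `DateParser` are hypothetical, made up for illustration; nothing like this appears in the original post.

```python
from datetime import date


def legacy_parse_date(raw):
    """Hypothetical legacy code: it works, but the interface is ugly.

    Expects 'DD/MM/YYYY' and returns a (day, month, year) tuple of strings.
    """
    day, month, year = raw.split("/")
    return day, month, year


class DateParser:
    """Thin wrapper: a clean interface outside, untouched legacy code inside."""

    def parse(self, raw: str) -> date:
        # Translate between the new requirement (a real date object)
        # and the old system (a tuple of strings).
        day, month, year = legacy_parse_date(raw.strip())
        return date(int(year), int(month), int(day))


parsed = DateParser().parse(" 24/02/2026 ")
print(parsed.isoformat())  # prints "2026-02-24"
```

The legacy function is never edited; only the layer around it changes, which is the whole point of the analogy.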

In Kazuo Ishiguro's The Remains of the Day, Stevens, the butler, reflects on the decades he spent perfecting his professional dignity at the expense of, well, everything else. His entire life was a refactoring project: eliminate the personal, optimize for service, become the ideal version of what a butler should be. By the end of the novel he's technically excellent and profoundly diminished. He optimized the wrong thing for forty years because he never stopped to ask whether the specification itself was flawed.

Won't Fix is the practice of questioning the specification. Most of the things you're trying to fix about yourself are only problems relative to some imagined ideal of a person you were never going to be. Your distractibility is a bug in the "focused knowledge worker" spec but might be a feature in the "person who notices interesting things and connects them unexpectedly" spec. Your sensitivity and your stubbornness, your tendency to monologue about niche topics at parties: all Won't Fix, and all load-bearing, and all probably okay in the big, heat-death-of-the-universe scheme of all things.

Stop trying to ship Human 2.0. Tag the bugs, write the wrappers, and get back to building something worth building. The most productive version of you probably looks a lot like the current version of you, plus a few well-placed adapter patterns and minus about thirty self-help books' worth of guilt about not being someone else.

2026-02-11

LLM Information Inflation

This section from Brandur's latest newsletter made me laugh out loud.

A conventional practice for execs at Snowflake was to send out what was called a “snippet”. Usually on a weekly cadence, these were emails containing personal notes on ongoing action and details on what their divisions were working on. The first thing you notice about “snippets” is the sheer volume of them – in the default set of on-boarding mailing lists you start getting them from every part of the organization on day one. The second thing you notice about snippets is their length – comprehensive detail, painstaking even. Essays once a week.

One might even say a suspicious amount of detail. Detail that includes a few too many tables, emoji, and emdashes. Yes, most of these were undoubtedly LLM-generated.

But LLM use isn’t just reserved for execs. In fact, Gemini was on by default, so everyone who received one of these long scrawls got a short, three point summary on top of it. The summary was so concise and so convenient that most recipients (including yours truly) read nothing further.

You have to step back and appreciate the absurdity of this situation. An executive enters three lines to produce a small novella which he then bulk emails to the rest of the organization. Receivers get an automatic three line summary that … looks a lot like what the sender wrote in the first place. The novella’s read by no one except a few stragglers who aren’t in on the joke yet. Is this progress?

There’s a punch line about information theory in here somewhere.

2026-02-10

Raw Japanese Denim Guide

Raw Japanese Denim: A Beginner's Guide #jeans #selvedge

Just putting this here for future reference. I currently own a pair of Nudie jeans made from Japanese raw denim, and another pair of bespoke Japanese raw denim jeans stitched at Monks of Method. For over six months now, I have been alternating between just these two pairs for my bottomwear. I haven't gone back to any of my other bottomwear in that time, no cap!

So I guess it will be a while before I have to go back and buy another pair of jeans. Which is a bit of a bummer because some of these jeans look really cool. Maybe I can donate one of my pairs and get one of these instead, just to keep things fresh.

2026-02-04

Your Life is the Sum Total of 2,000 Mondays

Your Life is the Sum Total of 2,000 Mondays #life #finitude

We plan our lives like we're editing a movie trailer.

The trip to Portugal, or the product launch, or the transformation photo at the gym. The big moment where everything crystallizes into meaning. We accumulate these peaks in our imagination, and then arrange them into a montage that proves our existence mattered, and that we really lived.

Then we spend the actual substance of our lives doing laundry and feeling crappy about it...

If you work a standard career from twenty-five to sixty-five, you'll experience roughly 2,080 Mondays. That's 2,080 alarm clocks set against your biological preferences and 2,080 inbox avalanches, plus 2,080 instances of navigating traffic or public transit while still metabolically processing the weekend. Add in the Tuesdays through Fridays, and you're looking at roughly 10,400 ordinary workdays across a career. Meanwhile, if you're fortunate enough to take two weeks of vacation annually for forty years, you'll accumulate 560 vacation days. The ratio is roughly 19:1 in favor of the mundane.
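The numbers in that paragraph are easy to check. A rough sketch of the arithmetic, assuming 52 weeks a year and counting "two weeks of vacation" as 14 calendar days (my assumption, chosen because it reproduces the quoted 560):

```python
YEARS = 40                   # a career from age 25 to 65
WEEKS_PER_YEAR = 52
WORKDAYS_PER_WEEK = 5
VACATION_DAYS_PER_YEAR = 14  # assumption: two weeks counted as calendar days

mondays = YEARS * WEEKS_PER_YEAR                # 2,080
workdays = mondays * WORKDAYS_PER_WEEK          # 10,400
vacation_days = YEARS * VACATION_DAYS_PER_YEAR  # 560
ratio = workdays / vacation_days

print(f"{mondays} Mondays, {workdays} workdays, {vacation_days} vacation days")
print(f"ratio of ordinary days to vacation days: {ratio:.1f}:1")  # prints 18.6:1
```

So the quoted 19:1 is 18.6 rounded up. Counting only the 10 working days in each two-week break would give 400 vacation days and a ratio of 26:1, which makes the point even more strongly.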

So we get to a question worth sitting with:

Do you actually like your average Monday?

The psychologist Philip Zimbardo has a framework called "time perspective theory." People differ in how much mental weight they assign to past, present, and future. Future-oriented people tend to achieve more by conventional metrics, but they also exhibit a consistent pattern of sacrificing present satisfaction for hypothetical future rewards. When researchers follow these people over time, they find that the anticipated future keeps receding and the scaffolding remains permanent.

Seneca diagnosed this exact pathology in first-century Rome. He observed that people guard their property vigilantly but waste their time freely, treating it as an infinite resource. "You act like mortals in all that you fear, and like immortals in all that you desire," he wrote.

The barely tolerated Monday is a down payment on a life that never arrives, a perpetual advance payment for goods that don't ship.

human infohazards

human infohazards - by Adam Aleksic - The Etymology Nerd #linguistics #culture #social-media

Traditionally, we’ve used the model of a virus to describe how ideas spread. I’ve already written about memes as if they can “infect” new “hosts” along an epidemiological network, and we literally use the phrase “going viral” to describe internet popularity.

I don’t think the idea of viral memetics is quite right to describe what’s happening here, so I’ll be referring to these infohazards through the framework of parasitic memetics. Unlike a virus, which just replicates and moves on, the parasite lives inside the host of the internet, feeding on the resources of our attention. There is a clear formula to a parasitic meme:

- Do something terrible

- People criticize you, bringing you attention

- Attention brings profit and influence, making it easier to do more terrible things

- Repeat

And yet there’s a fundamental difference between this problem and the atomic bomb: one infohazard is an irrefutable fact of nature, and the other is entirely dependent on the current structure of social media platforms. Parasitic memes are only possible online because everything is optimized around attention metrics. Beyond easily circumventable terms of service, there is no measurement rewarding kindness or social cohesion. This means that, if you disregard your own morality, the internet becomes a game you can optimize, where you “win” through any content possible, especially if someone criticizes you.

Parasitic memes are uniquely enabled by the ease of distribution. Newspapers and television channels had plenty of problems, but at least those forms of media had institutional gatekeepers preventing obviously evil content from being transmitted. Those barriers are now gone, and more people are finding out that they can use the disconnect to their advantage.